Gun Turrets on a Sailing Ship: moving past the dumb first phase of Enterprise AI

Peter Fuller

In September 1870, the British warship HMS Captain capsized in a gale off the coast of Spain. The ship’s designer, Captain Cowper Coles, was a 19th-century tech bro. He had mounted new swivelling gun turrets on a full-rigged sailing ship, despite repeated warnings that the resulting design would be top-heavy. When the first serious storm hit, the ship rolled over and took Coles with her.

Every new technology has a dumb first phase. We take the new thing and tack it onto the old system. It’s much harder to redesign systems around new capabilities, but that’s almost always where the value is.

At Tracelight, we are building AI for financial modelling. The dominant paradigm in our space is add-ins to Excel. So far, we and everyone else have been tacking AI onto the old system, like a swivelling gun turret on a sailing ship.

The future belongs to the products and companies that redesign the full stack. Here’s what that might look like in financial modelling.

Bringing the exhibits to life

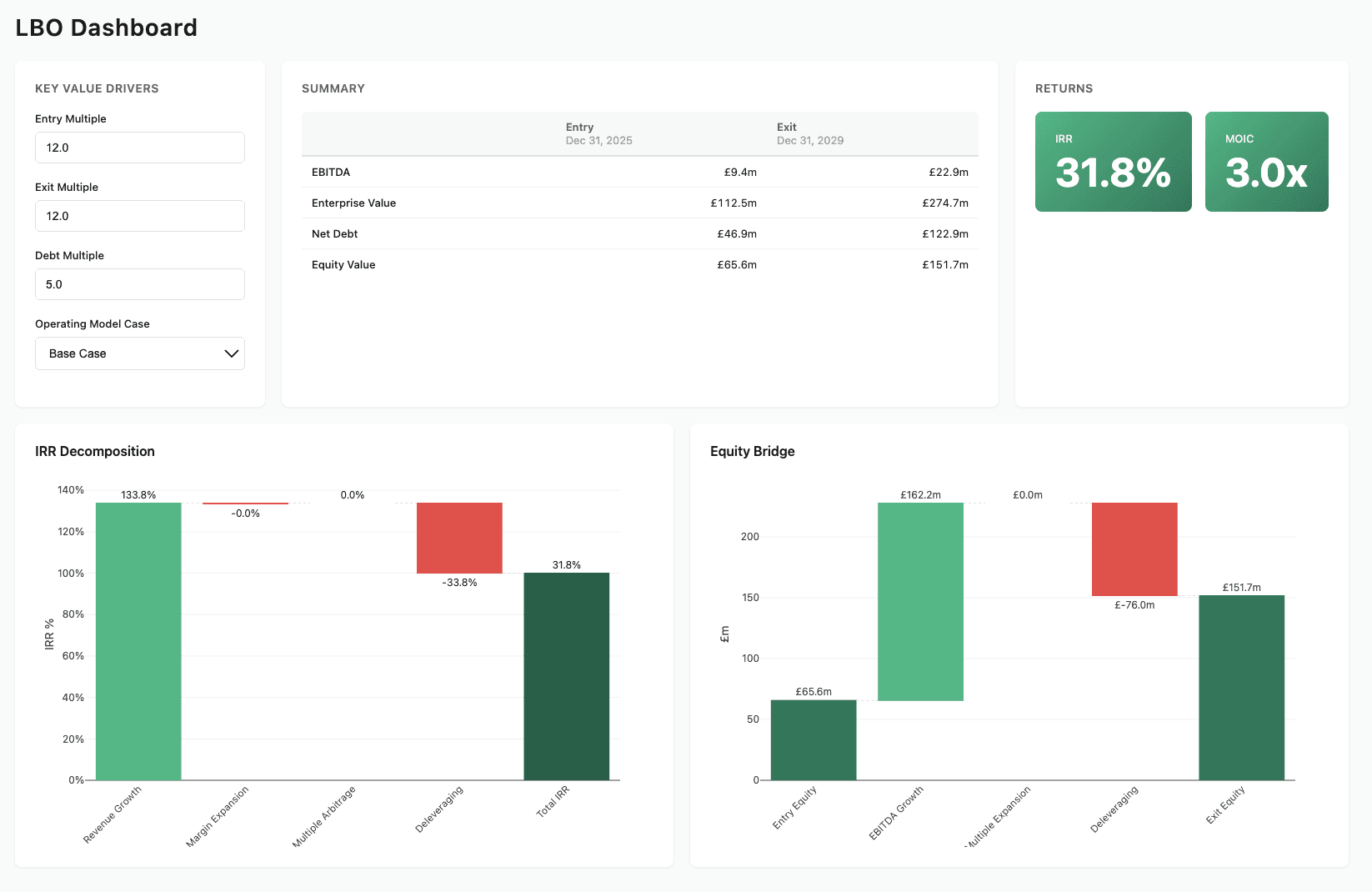

One of the most powerful moments in Tracelight's history was the first demo of this LBO dashboard web app.

This is a web app built from an Excel file. All the numbers are exactly what you would find if you opened the Excel. It was built by Tracelight, with one prompt.

Today, analysts work like museum guides. Their exhibits are PowerPoint decks and Excel workbooks, and every object they create is lifeless. Once the project is finished, the exhibits gather dust.

When we can make an artifact dynamic, we bring it to life. The work will answer questions even if its creators are asleep. Entire iteration cycles can be deleted, and the artifact can become part of a system after the project ends.

For advisory businesses, this new type of deliverable will unlock business models that are untethered from the advisor’s manpower. If your deliverables look more like software, you can charge like SaaS.

The review problem

Right now, almost everyone using AI has a review problem. AI can generate faster than we can validate: we write faster than we read. And the problem is only going to get worse.

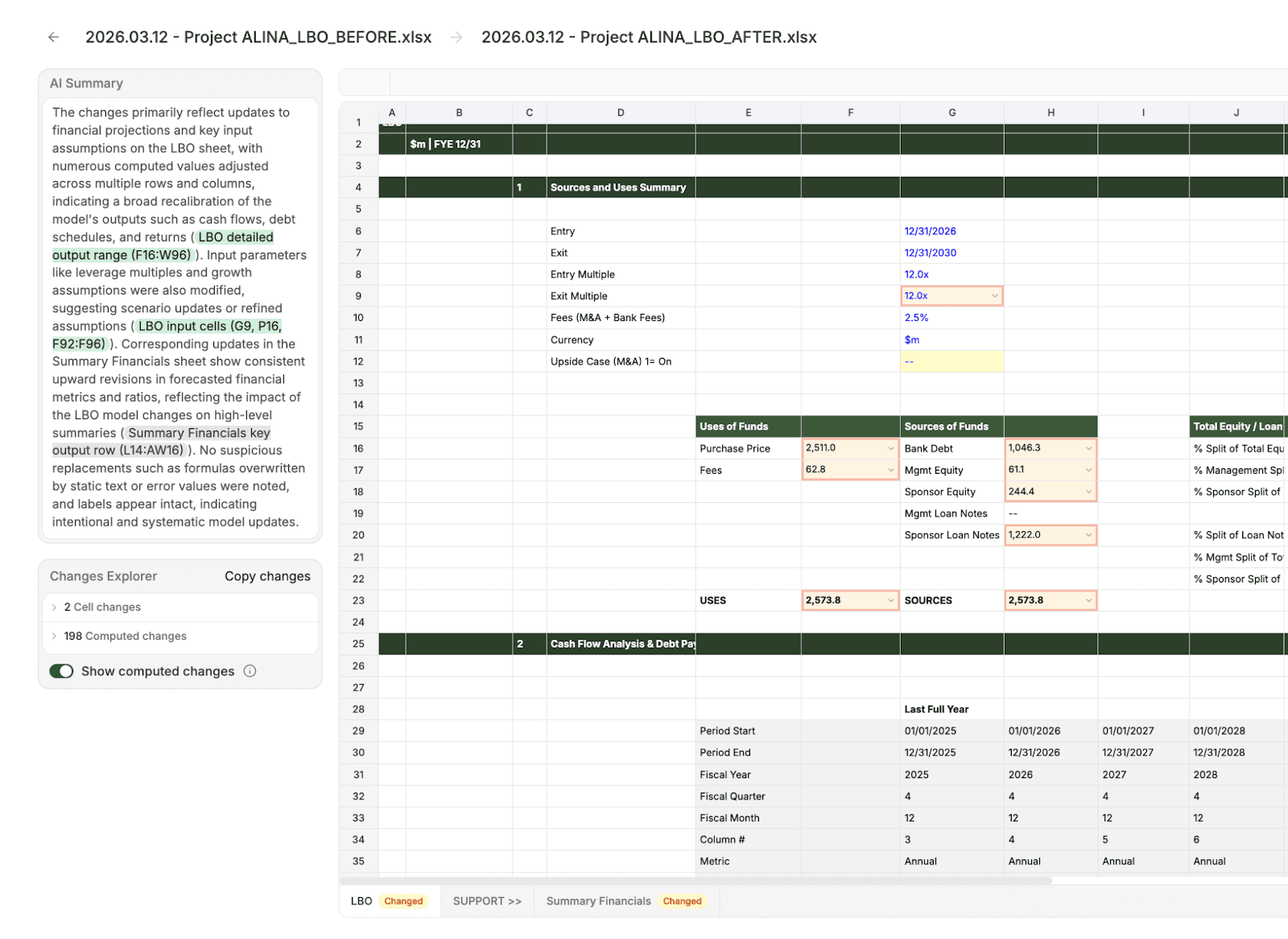

Financial modelling is particularly problematic. There's no structured way to review changes between two versions of a spreadsheet. There are no test suites. Review is slow and manual, and the gap between generation speed and review speed is already wide. Review speed is the bottleneck on everything else. Until review catches up, your gains from AI are capped.
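To make "structured review" concrete, here is a minimal sketch of a cell-level comparison between two versions of a sheet. It is an illustration only, not Tracelight's implementation: it assumes each version has already been reduced to a plain mapping of cell address to formula text, and the names `CellChange` and `diff_cells` are hypothetical.

```python
from typing import Dict, List, NamedTuple


class CellChange(NamedTuple):
    address: str
    kind: str  # "added", "removed", or "changed"
    old: str   # formula text before ("" if the cell did not exist)
    new: str   # formula text after ("" if the cell was deleted)


def diff_cells(old: Dict[str, str], new: Dict[str, str]) -> List[CellChange]:
    """Compare two versions of a sheet, each a mapping of cell address to formula text."""
    changes = []
    # Addresses are visited in plain string order, which is fine for a sketch.
    for address in sorted(set(old) | set(new)):
        before = old.get(address)
        after = new.get(address)
        if before == after:
            continue
        if before is None:
            kind = "added"
        elif after is None:
            kind = "removed"
        else:
            kind = "changed"
        changes.append(CellChange(address, kind, before or "", after or ""))
    return changes


# Hypothetical example data: two versions of the same small sheet.
v1 = {"B2": "1000", "B3": "=B2*1.05", "B4": "=SUM(B2:B3)"}
v2 = {"B2": "1000", "B3": "=B2*1.08", "B5": "=B4*0.25"}

for change in diff_cells(v1, v2):
    print(f"{change.address}: {change.kind}: {change.old!r} -> {change.new!r}")
```

A reviewer reading this output sees three changes instead of rereading the whole sheet. A real tool would also need to handle inserted rows, moved ranges, and values as well as formulas, which this sketch ignores.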

This is a product problem, not an LLM problem. The models are already good enough to do serious work in spreadsheets. What's missing is the verification layer: the tooling that makes review fast enough to keep up.

This requires purpose-built infrastructure: model comparison, versioning, citation trails, higher-level proofs, error-checking. This is what we're building at Tracelight.
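As one illustration of what error-checking could look like, the sketch below treats a model like code under test: a handful of invariants run against its outputs and report every period that fails. Everything here is assumed for the example, including the line item names, the figures, and the functions `check_balance_sheet`, `check_no_negative_cash` and `run_checks`.

```python
from typing import Callable, Dict, List

Model = Dict[str, List[float]]  # line item name -> one value per period
Check = Callable[[Model], List[str]]


def check_balance_sheet(model: Model, tolerance: float = 0.01) -> List[str]:
    """Report each period where assets differ from liabilities plus equity."""
    failures = []
    periods = zip(model["assets"], model["liabilities"], model["equity"])
    for i, (assets, liabilities, equity) in enumerate(periods):
        gap = assets - (liabilities + equity)
        if abs(gap) > tolerance:
            failures.append(f"period {i}: balance sheet off by {gap:.2f}")
    return failures


def check_no_negative_cash(model: Model) -> List[str]:
    """Report each period where the cash balance is below zero."""
    return [
        f"period {i}: cash is negative ({cash:.2f})"
        for i, cash in enumerate(model["cash"])
        if cash < 0
    ]


def run_checks(model: Model, checks: List[Check]) -> List[str]:
    """Run every check and collect all failure messages in order."""
    failures = []
    for check in checks:
        failures.extend(check(model))
    return failures


# Hypothetical three-period model with an error planted in the last period.
model = {
    "assets": [500.0, 540.0, 600.0],
    "liabilities": [300.0, 310.0, 350.0],
    "equity": [200.0, 230.0, 240.0],
    "cash": [50.0, 20.0, -5.0],
}

for failure in run_checks(model, [check_balance_sheet, check_no_negative_cash]):
    print(failure)
```

The point of the design is that checks are small, named, and rerunnable, so every AI-generated change can be validated in seconds rather than by eye.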

The tools aren't ready for the demand that's coming

Analysis is like a pizza-eating contest, where the prize is more pizza. As AI makes analysis a lot easier, the market will simply demand more of it. Questions will become more complex, and analysts will have to look at more data, and create deeper models, faster.

But there's a simple product problem here. Excel wasn't built for the demand that's coming.

One problem is scale. Excel is famously bad at handling large amounts of data: a single worksheet is capped at 1,048,576 rows. As analysis gets bigger, both in terms of input data and number of operations, this limitation will become a strategic problem.

Another is speed. Decision-makers will want their agents to answer complicated analytical questions in real-time. The barrier won't be LLM token throughput; that's getting solved. The problem will be the speed of the agents' tools. If calculations are happening in the old Excel, users and LLMs will be stuck waiting around.

The answer isn't to abandon spreadsheets for code. Analysts can't review what they can't read. We need to figure out how to overcome the limitations of Excel, but keep expressing the logic in the cells and formulas that human users are familiar with. This needs to get solved if AI is going to transform how analytical work gets done.

Beyond tools

There's a bigger question behind all of this. As AI does more of the cognitive work, what stops a knowledge organisation from becoming a thinning wrapper around an API? Can an investment firm reliably beat ChatGPT? Will a consultancy give better advice than Claude?

The short answer is knowledge: both the tacit judgment that lives in the heads of senior people and the proprietary data (benchmarks, playbooks) that no foundation model has access to. There are other moats: humans can shake hands, sign contracts, and take the blame when things go wrong. But the deepest advantage is knowledge.

At some point, this knowledge will need to find its way into organisations' private AI systems. When it does, the AI tools these organisations use will become more like sensory organs and a nervous system. They will do the work, observe real-world results, and learn without intervention.

We have a lot to say about what this looks like, but will save it for another post. For now: this is what the new ship will look like. And since the benefits compound, the sooner organisations start building it, the better.
